Selling and marketing medical devices in European Union (EU) countries requires them to bear the CE marking. Medical device manufacturers must comply with regulatory requirements to ensure their products meet quality and safety standards. International regulations and standards related to medical devices, such as the European MDR 2017/745 and ISO 13485:2016, can be complex but are essential. Non-compliance with these regulations and standards may force manufacturers to cease production altogether. In this article, we provide a guide to understanding the key regulations for obtaining the CE marking.

What is the CE marking?

The CE marking stands for Conformité Européenne (French for "European Conformity"). As the name suggests, it certifies that a product complies with European safety, health, and environmental protection requirements. The marking is required for many product types. For medical devices, it allows companies to distribute and sell their devices across the 30 countries in the European Economic Area (EEA) once they comply with European Regulation 2017/745, also known as the Medical Device Regulation (MDR). This regulation governs the placing of medical devices for human use on the market. Therefore, the presence of the CE letters on a medical device signifies that the product meets all legal requirements for distribution throughout the EEA.

Why is the CE marking Important for Medical Devices?

All medical devices that comply with European legislation can obtain the CE marking. The CE marking indicates that a medical device has undergone risk assessment procedures and is a safe and high-quality product for patients.

A product cannot be legally sold or marketed in EEA countries without this marking, except for devices used exclusively for research purposes. Moreover, compliance with this regulation gives companies worldwide an opportunity to expand their business.

For example, during the COVID-19 pandemic, numerous infrared thermometers entered the European market, regardless of the manufacturer’s country of origin. The CE marking indicated that these devices complied with the requirements and could be legally sold and marketed in EEA countries.

Here are some key benefits of obtaining the CE marking:

- Confirms that your device meets the essential legal requirements of the EU.

- Allows marketing in all 30 EEA member countries.

- Provides an advantage in entering new markets, since some non-EEA countries recognize the CE marking.

- Demonstrates compliance with safety and quality standards.

Standards and Regulations for CE Marking

Understanding the regulations and standards related to medical devices is crucial. Below, we outline the key international standards and regulations necessary for a better understanding of the CE marking process.

1. Regulation (EU) 2017/745

Regulation (EU) 2017/745, also known as the European Medical Device Regulation (MDR), is the current regulation. It fully replaces the previous Medical Device Directive (MDD) and the Active Implantable Medical Devices Directive (AIMDD).

2. Directive 2001/83/EC

Directive 2001/83/EC concerns the placement of medicinal products for human use on the market. When a medical device is combined with a medicinal product, such as a drug, manufacturers must determine which component is responsible for the primary function of the combined product.

If the drug enhances the activity of the medical device and cannot be used separately, it becomes an integral part of the device. The combined product is then classified as a medical device and must comply with Regulation (EU) 2017/745.

3. Regulation (EC) 726/2004

Regulation (EC) 726/2004 concerns the placement of medicinal products for human and veterinary use on the market. For medical devices, it functions similarly to Directive 2001/83/EC.

4. Directive 2004/23/EC

Directive 2004/23/EC establishes quality and safety standards for the donation, procurement, testing, processing, preservation, storage, and distribution of human tissues and cells. Medical devices containing non-viable tissues or cells with a secondary function must comply with MDR.

The general safety and performance requirements in the MDR must be applied to the part of the device containing these elements, regardless of their primary function.

5. ISO 13485:2016

The ISO 13485:2016 standard defines the requirements for a quality management system (QMS) for medical devices. Medical device manufacturers often adhere to this standard, because compliance with it is presumed to align with the QMS requirements in the MDR.

Compliance with this standard ensures adherence to quality management system requirements, including:

- Quality manual

- Document and record control

- Quality management system

- Human resources

- Facility structure

- Contamination control

- Design, development, and transfer planning

- Medical device files

- Supplier evaluation and selection

- Service activities

- Sterile medical device requirements

- Medical device identification and traceability

- Complaint handling

- Nonconforming product control

- Post-market surveillance

6. ISO 14971:2019

ISO 14971:2019 was developed specifically for medical device manufacturers and sets out principles for applying risk management to medical devices. It serves as a guide for developing and maintaining risk management processes.

Risk management is a requirement in the MDR. However, manufacturers can achieve compliance without necessarily obtaining certification under this standard.

7. FDA 21 CFR Part 820

FDA 21 CFR Part 820 outlines the quality system requirements applicable to medical device manufacturers. Companies aiming to enter the U.S. market must have a quality management system (QMS) that complies with FDA 21 CFR Part 820 and obtain FDA approval.

This regulation can also serve as a guide for the MDR quality management system requirements in the European market. However, most companies opt to follow ISO 13485:2016, as certification for it can be obtained.

Steps to Obtain the CE marking for Medical Devices

The process of obtaining a CE marking can be somewhat complex. To assist you, this guide outlines the general steps to acquire it.

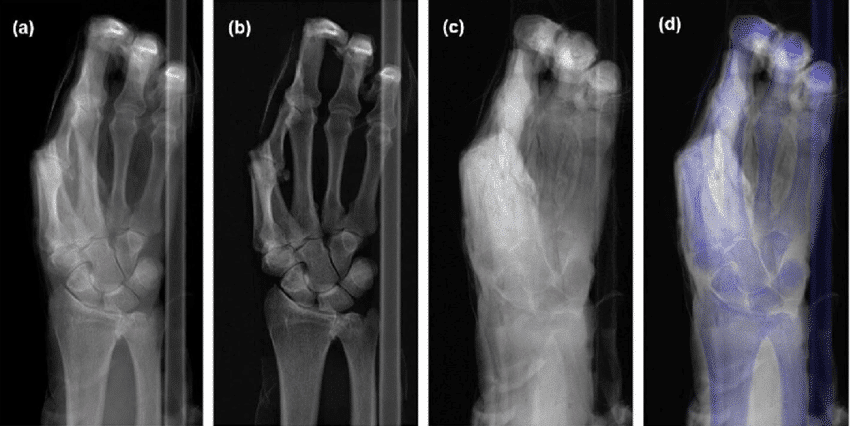

1. Determine the Medical Device Classification

Identify the classification guidelines set by the MDR based on risk level, body placement, and duration of use.

- Risk: Devices are categorized into Class I, IIa, IIb, and III. The higher the class, the greater the risk posed to the patient.

- Body placement: Devices can be non-invasive (on the body’s surface) or invasive (penetrating the body).

- Duration of use: Devices are classified as transient (up to 60 minutes), short-term (up to 30 days), or long-term (more than 30 days).
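The duration categories above lend themselves to a simple lookup. The following is a minimal Python sketch, not an official classification tool: the function name `duration_category` is hypothetical, and treating exactly 60 minutes and exactly 30 days as belonging to the lower category is an assumption based on the "up to" wording above. Duration is only one input; the final device class also depends on risk and body placement.

```python
from datetime import timedelta


def duration_category(continuous_use: timedelta) -> str:
    """Return the duration-of-use category for an intended continuous use."""
    if continuous_use <= timedelta(0):
        raise ValueError("continuous_use must be a positive duration")
    if continuous_use <= timedelta(minutes=60):  # assumption: boundary is inclusive
        return "transient"
    if continuous_use <= timedelta(days=30):  # assumption: boundary is inclusive
        return "short-term"
    return "long-term"


# Example: a 15-minute measurement, a 7-day dressing, a 90-day implant
for use in (timedelta(minutes=15), timedelta(days=7), timedelta(days=90)):
    print(use, "->", duration_category(use))
```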

2. Appoint a Person Responsible for Regulatory Compliance (PRRC)

Medical device manufacturers must designate at least one individual responsible for regulatory compliance within the company. This person should have expertise in the medical device field.

3. Implement a Quality Management System and Risk Management

The MDR requires manufacturers to have quality management and risk management systems in place. This is why many medical device manufacturers choose to comply with ISO 13485:2016, as it is presumed to align with the MDR requirements for a quality management system.

4. Prepare Technical Documentation

Technical documentation, also known as medical device technical files, contains detailed information about the lifecycle of your medical device and is a requirement under the MDR.

5. Implement a Supplier Management System

The MDR requires medical device companies to have a supplier management system in place. Suppliers must be audited to ensure compliance with requirements and standards. Creating a list of approved suppliers based on predefined criteria is useful to ensure that only qualified suppliers provide products and services.

6. Conduct a Clinical Evaluation

Manufacturers must conduct a clinical evaluation to demonstrate compliance with safety and performance requirements. In practice, this means developing a plan to collect and analyze clinical data from relevant scientific literature and clinical investigations involving the specific medical device or an equivalent product.

7. Appoint an Authorized Representative in Europe (if required)

If the medical device manufacturer is not based in the European Economic Area (EEA), they must appoint an authorized representative within an EEA member state.

The authorized representative is responsible for tasks such as:

- Verifying technical documentation

- Informing the manufacturer of complaints

- Registering a physical location for the notified body to receive device samples for inspection

8. Obtain Certification from a Notified Body

A notified body is an independent organization responsible for assessing product compliance before a product is placed on the market. For medical devices, the notified body audits manufacturers and issues certifications confirming compliance with the MDR.

For higher-risk medical devices, this certification is mandatory, specific to each procedure, and valid for a maximum of five years. After this period, the notified body will audit the manufacturer’s quality management system (QMS) and technical documentation to verify continued compliance with the MDR.

9. Prepare a Declaration of Conformity

After obtaining certification from the notified body, manufacturers must prepare a Declaration of Conformity (DoC), taking responsibility for ensuring that the device meets the specific requirements of the MDR.

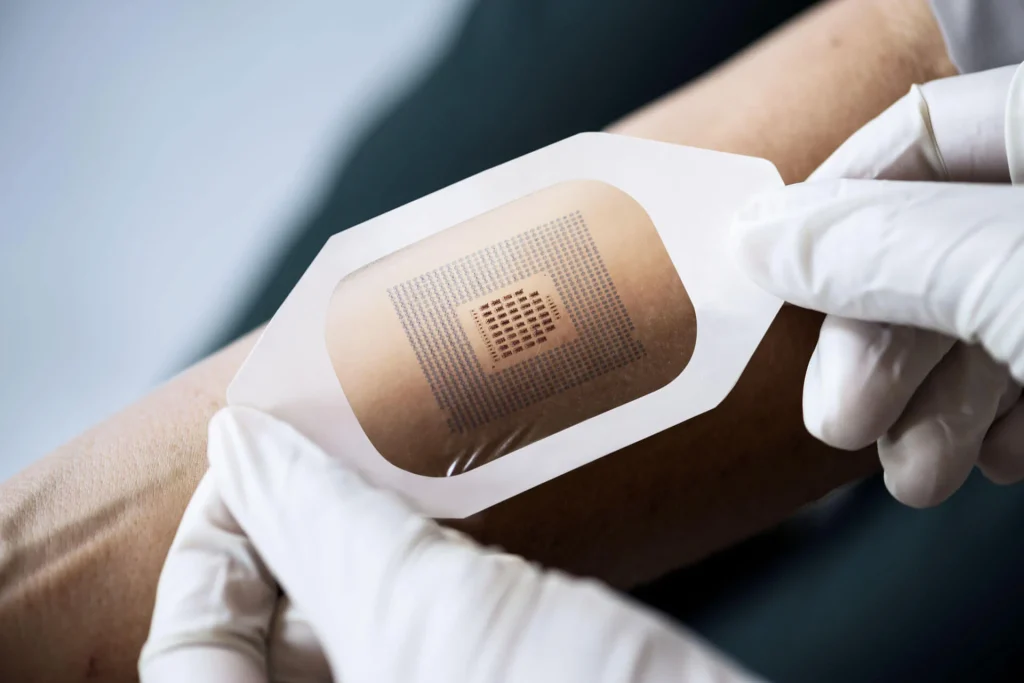

10. Register Your Device with a Unique Device Identifier (UDI)

To facilitate the traceability of medical devices, each device model must be assigned a Unique Device Identifier (UDI).

The UDI is a unique numeric or alphanumeric code stored in the European Database on Medical Devices (EUDAMED), where essential information about the device can be accessed. The UDI is an additional requirement and does not replace the CE marking or other labeling requirements.

11. Affix the CE marking on the Medical Device

After obtaining approval from national authorities and certification from the notified body, manufacturers can place the CE marking on their medical devices.

The CE marking must be displayed on:

- The device itself

- The packaging

- Any instructions for use

It is essential that the CE marking is visible, legible, and indelible, so that it cannot be removed or washed off.

For Class IIa, Class IIb, and Class III medical devices, the four-digit number of the notified body must also be printed next to the CE marking.
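The four-digit rule can be checked mechanically before label artwork is released. This is a small illustrative Python sketch under stated assumptions: `ce_label_text` is a hypothetical helper, it only composes the text placed next to the CE symbol, and `"0000"` is a placeholder value that is not meant to refer to any particular notified body.

```python
import re
from typing import Optional


def ce_label_text(notified_body_number: Optional[str] = None) -> str:
    """Compose the text that accompanies the CE symbol on a label.

    Pass None for devices placed on the market without a notified body.
    """
    if notified_body_number is None:
        return "CE"
    # The number is kept as a string so that leading zeros are preserved.
    if not re.fullmatch(r"[0-9]{4}", notified_body_number):
        raise ValueError("notified body number must be exactly four digits")
    return f"CE {notified_body_number}"


print(ce_label_text())        # self-declared device
print(ce_label_text("0000"))  # placeholder notified body number
```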

12. Maintain Post-market Surveillance

Before obtaining the CE marking and placing a medical device on the European Economic Area (EEA) market, manufacturers must demonstrate that a post-market surveillance (PMS) system has already been implemented to address safety and effectiveness concerns.

Medical device companies must collect data on their sold devices through post-market surveillance, vigilance activities, and market monitoring plans.

This includes feedback related to patient experience with the medical device and the product lifecycle.

Manufacturer Requirements Include:

- Monitoring complaints, adverse events, and non-conformities

- Regularly updating safety reports

- Conducting internal and supplier audits regularly

- Keeping technical documentation, databases, and records up to date

This surveillance ensures the proactive collection and review of real-world evidence on device quality and safety. As a result, manufacturers can better handle customer complaints, identify risks, and implement product recalls and other market actions.

Frequently Asked Questions About the CE marking

Here are some common questions about the CE marking for medical devices, which ensures compliance with safety, health, and environmental protection standards.

1- Is the CE marking the same as FDA approval?

Both the European CE marking and U.S. Food and Drug Administration (FDA) approval aim to evaluate the safety and effectiveness of medical devices. However, each is valid only in its respective market.

2- How long is the CE marking valid?

The validity of the CE marking is determined by the notified body and depends on the classification of the medical device. However, it cannot exceed five years. After that, the device must undergo recertification.

For example, a Class IIa device may receive certification valid for only three years. Additionally, annual surveillance audits are conducted between certification renewals.
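To track when recertification falls due, the five-year ceiling can be expressed as a date calculation. The sketch below is illustrative only: `certificate_expiry` and `needs_recertification` are hypothetical names, and it assumes validity is granted in whole years counted from the issue date, which the notified body's actual certificate may not follow.

```python
from datetime import date

MAX_VALIDITY_YEARS = 5  # upper limit stated above


def certificate_expiry(issued: date, valid_years: int) -> date:
    """Return the expiry date of a certificate issued on `issued`."""
    if not 1 <= valid_years <= MAX_VALIDITY_YEARS:
        raise ValueError(f"validity must be 1 to {MAX_VALIDITY_YEARS} years")
    try:
        return issued.replace(year=issued.year + valid_years)
    except ValueError:
        # Issued on 29 February and the target year is not a leap year.
        return issued.replace(year=issued.year + valid_years, day=28)


def needs_recertification(issued: date, valid_years: int, today: date) -> bool:
    """True once the certificate has reached its expiry date."""
    return today >= certificate_expiry(issued, valid_years)


# Example: a three-year certificate, as in the Class IIa case above
print(certificate_expiry(date(2022, 3, 1), 3))
```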

3- Can a manufacturer self-certify a medical device for the CE marking?

Class I medical devices that are non-sterile and non-measuring can self-declare compliance. However, higher-class devices must be assessed by notified bodies to obtain the CE marking.
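The rule in this answer can be written as a short check. This is a hedged Python sketch that encodes only the condition stated here (Class I, non-sterile, non-measuring); the `Device` type and `can_self_declare` function are hypothetical, and the regulation contains further cases that this simplification does not cover.

```python
from dataclasses import dataclass

RISK_CLASSES = {"I", "IIa", "IIb", "III"}


@dataclass(frozen=True)
class Device:
    risk_class: str          # one of RISK_CLASSES
    sterile: bool = False
    measuring: bool = False


def can_self_declare(device: Device) -> bool:
    """True if the device can self-declare under the rule stated above."""
    if device.risk_class not in RISK_CLASSES:
        raise ValueError(f"unknown risk class: {device.risk_class!r}")
    return (
        device.risk_class == "I"
        and not device.sterile
        and not device.measuring
    )


# Example: a plain Class I device versus a sterile one
print(can_self_declare(Device("I")))
print(can_self_declare(Device("I", sterile=True)))
```

Any device for which the function returns `False` would need assessment by a notified body before the CE marking is affixed.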

4- How long does it take to get CE marking approval?

The approval timeline for the CE marking varies depending on the device’s classification and complexity, as well as whether the manufacturer already has a certified Quality Management System (QMS) under ISO 13485:2016. Generally, obtaining CE marking approval takes between 16 and 18 months from start to finish.

5- How many notified bodies are designated for CE marking?

According to the NANDO database, as of 2022, there were 34 notified bodies designated under the MDR and 7 under the IVDR.

Source: CE Marking for Medical Devices [Step-by-Step Guide]